AI Security: The Threat Nobody Takes Seriously

IN ONE SENTENCE

As AI handles critical tasks; emails, documents, decisions; vulnerabilities become real business risks. Ignoring them is leaving the door wide open.

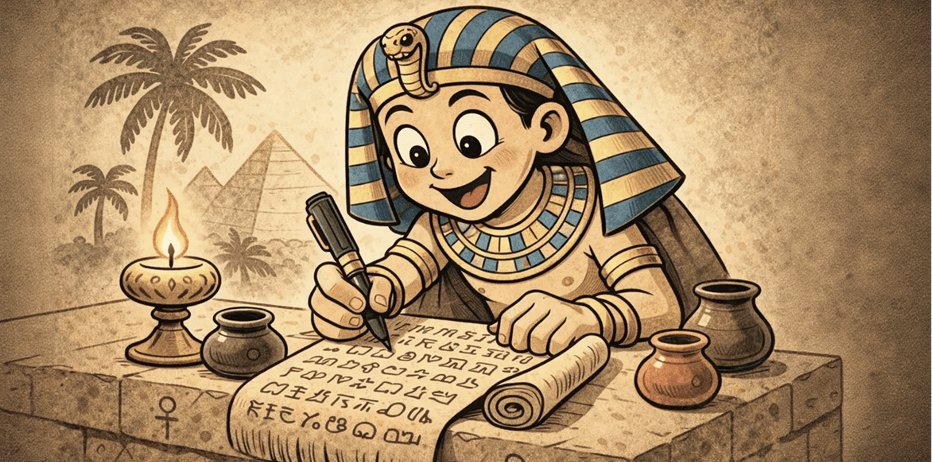

THE OBSERVATION

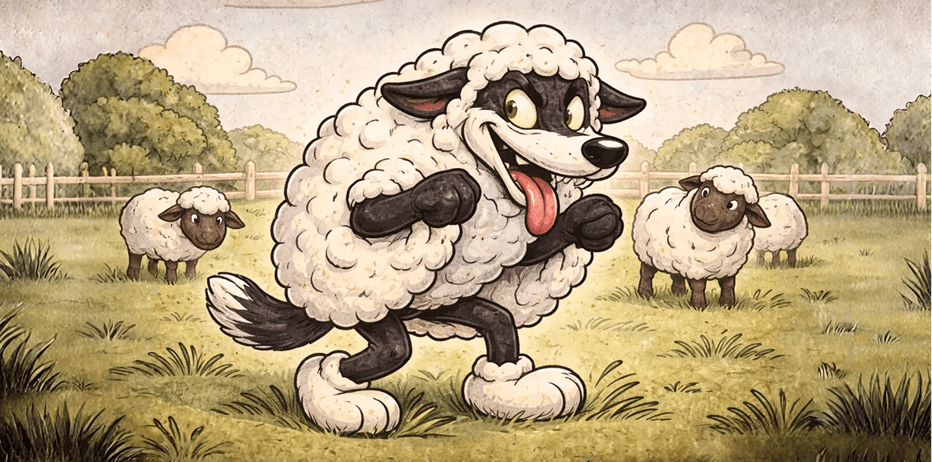

AI models don't always distinguish legitimate instructions from malicious ones slipped into content. An innocuous document can contain hidden directives that hijack the system's behavior.

The more autonomous the AI and the more sensitive data it handles, the wider this attack surface becomes. An agent processing job applications, negotiating contracts, or managing financial transactions is a prime target.

WHAT YOU NEED TO UNDERSTAND

AI security is not a technical topic reserved for developers. It's a business topic:

- Every agent must have limited permissions, principle of least privilege.

- Irreversible actions (sending emails, payments, deletions) must always require human validation.

- User inputs must be treated as untrusted by default, exactly as in web development.

- Regular audits of agent behavior help detect drift before it becomes an incident.

WHAT THIS CHANGES FOR YOU

- Never deploy an agent with full access without guardrails. Start restrictive, expand gradually.

- Test your AI systems with adversarial scenarios; try to trick them before someone else does.

- Integrate AI security into your quarterly risk reviews, alongside traditional cybersecurity.

The more powerful and autonomous an AI system, the more dangerous its flaws. Security isn't optional; it's the price of autonomy.

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)