The Jagged Frontier of AI

IN ONE SENTENCE

AI is brilliant in some areas and mediocre in others, and no one can predict where the boundary lies.

THE OBSERVATION

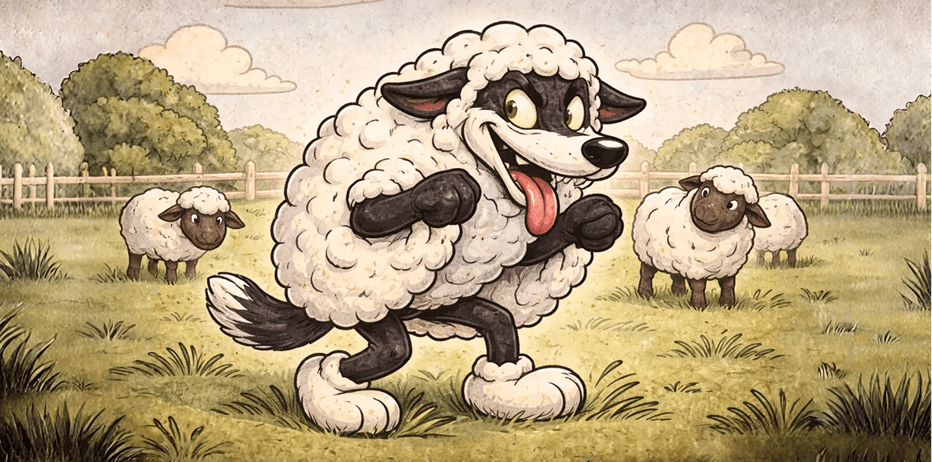

Ethan Mollick introduced the concept of the "jagged frontier" to describe a phenomenon every company using AI eventually encounters: AI models don't progress uniformly. The same model can outperform an expert in financial analysis while failing at a common-sense task an intern would ace. This irregularity isn't a temporary bug: it's the very nature of these systems.

The problem gets worse: this frontier constantly shifts. With every new model version, some weaknesses disappear while new ones appear. Companies that build entire processes around the limitations of a given model end up with obsolete systems as soon as the next update drops.

WHAT YOU NEED TO UNDERSTAND

Test task by task, not as a whole

The most common mistake is deciding wholesale that AI works or doesn't work for a department. In reality, each individual task must be evaluated separately. An AI tool can excel at writing meeting summaries yet fail at producing reliable sales forecasts, in the same department and for the same user.
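As a rough illustration of what task-by-task testing could look like in practice, here is a minimal Python sketch. Everything in it is an assumption made for the example: `stub_model` stands in for a real model call, the task names and checks are invented, and the 0.8 adoption threshold is arbitrary. The point is only that each task gets its own cases and its own verdict.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class TaskCase:
    prompt: str
    check: Callable[[str], bool]  # True if the model output is acceptable


def evaluate_tasks(model: Callable[[str], str],
                   suites: Dict[str, List[TaskCase]],
                   threshold: float = 0.8) -> Dict[str, dict]:
    """Score each task separately instead of the department as a whole."""
    report = {}
    for task, cases in suites.items():
        if not cases:
            continue  # nothing to measure for this task yet
        passed = sum(1 for case in cases if case.check(model(case.prompt)))
        rate = passed / len(cases)
        report[task] = {"pass_rate": rate, "adopt": rate >= threshold}
    return report


def stub_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call.
    if prompt.startswith("Summarize"):
        return "Summary: " + prompt[len("Summarize"):].strip()
    return "unknown"


# Illustrative suites: same department, same user, two different tasks.
suites = {
    "meeting_summary": [
        TaskCase("Summarize: budget approved", lambda out: out.startswith("Summary:")),
        TaskCase("Summarize: launch delayed", lambda out: "launch" in out),
    ],
    "sales_forecast": [
        TaskCase("Forecast Q3 sales", lambda out: out.strip().isdigit()),
    ],
}

report = evaluate_tasks(stub_model, suites)
for task, result in report.items():
    print(task, result)
```

With this toy model, the summary task clears the threshold and the forecast task does not, which is the kind of split verdict a department-wide judgment would hide.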

Don't over-invest in workarounds

Mollick warns: if you invest heavily to compensate for AI's current weaknesses, you risk ending up with a legacy system built around a jagged frontier that no longer exists. The key is building flexible architectures that can evolve with the models, while accepting that some tasks remain out of reach for now.

The self-driving car analogy

Mollick compares the situation to self-driving cars: systems that are superhuman in some situations but stumble in others. Deployment took years not because the technology was bad, but because this irregularity made trust difficult to calibrate.

WHAT THIS CHANGES FOR YOU

- Run task-by-task evaluations rather than department-wide ones: create your own Turing test for each critical process

- Build your AI workflows modularly so you can swap components as models evolve

- Accept the dual bet: fix current weaknesses while betting that many will disappear with future versions

- Train your domain experts to identify where AI excels and where it fails in their field
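The recommendation to build workflows modularly can be pictured with a small Python sketch. It is an illustration under stated assumptions, not a reference design: the `Workflow` class, the step names, and the `model-v1` / `model-v2` labels are all hypothetical. Each step is a plain function behind a name, so one step can be replaced when a model changes without touching the rest.

```python
from typing import Callable, Dict

Step = Callable[[str], str]


class Workflow:
    """Runs named steps in order; any step can be swapped independently."""

    def __init__(self, steps: Dict[str, Step]):
        self.steps = dict(steps)  # dicts preserve insertion order

    def swap(self, name: str, new_step: Step) -> None:
        if name not in self.steps:
            raise KeyError(f"no step named {name!r}")
        self.steps[name] = new_step  # position in the pipeline is kept

    def run(self, text: str) -> str:
        for step in self.steps.values():
            text = step(text)
        return text


# Hypothetical steps: only "draft" depends on a specific model version.
workflow = Workflow({
    "clean": lambda t: t.strip(),
    "draft": lambda t: f"[model-v1] {t}",
    "review": lambda t: t + " (needs human review)",
})

before = workflow.run("  quarterly summary  ")

# A new model version arrives: replace one component, keep the others.
workflow.swap("draft", lambda t: f"[model-v2] {t}")
after = workflow.run("  quarterly summary  ")

print(before)
print(after)
```

The workaround for the older model lives in a single replaceable step, so retiring it costs one `swap` call instead of a rebuild.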

AI's jagged frontier is not an obstacle to work around: it's a reality to integrate into your strategy. The companies that succeed will be those that systematically test, build flexibly, and accept that the map of AI capabilities is constantly being redrawn.

Source: Ethan Mollick, Strange Loop Podcast (Sana Labs), June 2025.
