Your Results Depend on the Precision of Your Instructions

IN ONE SENTENCE

The quality of what AI produces depends directly on the quality of what you ask. A vague instruction gives a generic result. A precise instruction gives an actionable result.

THE OBSERVATION

At NODS, we observe the same pattern with every new client: "We tested AI and the results were mediocre." When we look at their queries, it is systematically the same problem: vague instructions, no context, no format constraints, and no precision about the expected output.

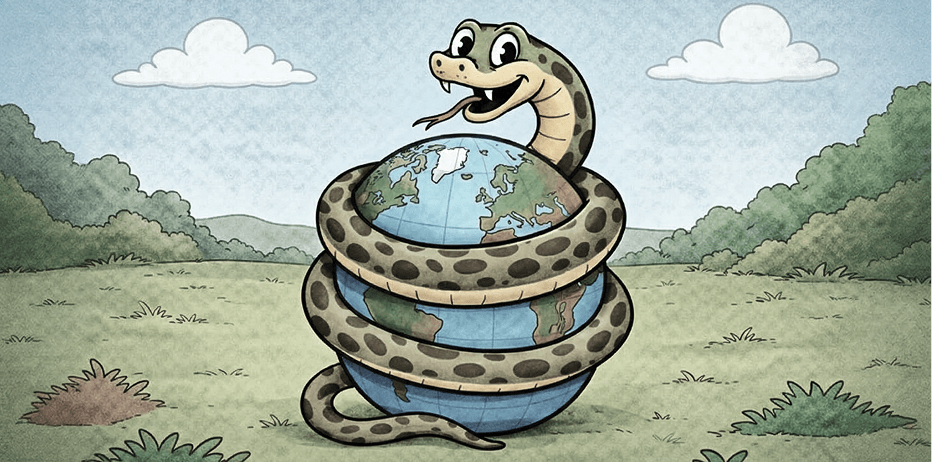

The model doesn't interpret your intentions. It works with the information you provide. The less you give, the more it fills the gaps with the most statistically likely, and therefore most generic, content.

WHAT YOU NEED TO UNDERSTAND

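A good prompt contains at minimum the elements missing from the queries described above: a specific instruction, the relevant context, format constraints, and a precise description of the expected output.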

WHAT THIS CHANGES FOR YOU

- Treat each query like a creative brief. The better the brief, the fewer back-and-forths.

- Create prompt templates for your recurring tasks; it's an investment that pays off from the first week.

- Test, iterate, refine. The perfect first-try prompt doesn't exist, but a prompt optimized over three iterations beats any improvised prompt.
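
As an illustration of the template idea, here is a minimal Python sketch of a reusable brief. The class name `PromptBrief`, its fields, and the section labels are assumptions made for this example, not a NODS standard or any library's API. It simply refuses to render a brief that lacks a task, context, or output format.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """Hypothetical container for the parts of a brief (names are illustrative)."""
    task: str
    context: str
    output_format: str
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Reject a brief with an empty required part instead of sending a vague prompt.
        for name in ("task", "context", "output_format"):
            if not getattr(self, name).strip():
                raise ValueError(f"missing brief section: {name}")
        sections = [
            f"Context: {self.context.strip()}",
            f"Task: {self.task.strip()}",
            f"Expected output format: {self.output_format.strip()}",
        ]
        # Constraints are optional; render them as a bullet list when present.
        if self.constraints:
            bullets = "\n".join(f"- {c}" for c in self.constraints)
            sections.append(f"Constraints:\n{bullets}")
        return "\n\n".join(sections)


if __name__ == "__main__":
    brief = PromptBrief(
        task="Summarize the meeting notes pasted below.",
        context="Weekly project meeting; the readers are managers who did not attend.",
        output_format="Five bullet points, one sentence each.",
        constraints=["Under 100 words in total", "No jargon"],
    )
    print(brief.render())
```

Once a template like this exists for a recurring task, only the field values change from one request to the next, which makes it easier to compare iterations.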

AI isn't telepathic. It's literal. The precision of your request is the only lever you fully control. Invest in it.
